Binary and Scalar Embedding Quantization for Significantly Faster & Cheaper Retrieval


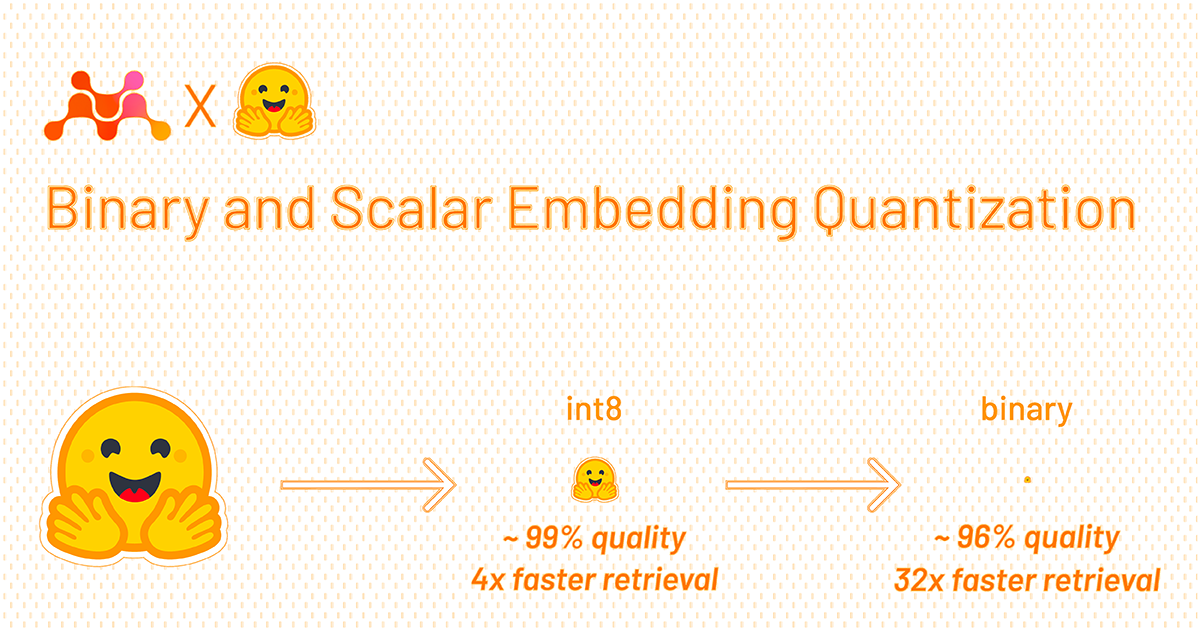

Published March 22, 2024

Authors: Aamir Shakir (aamirshakir), Tom Aarsen (tomaarsen), SeanLee (SeanLee97)

We introduce the concept of embedding quantization and showcase its impact on retrieval speed, memory usage, disk space, and cost. We'll discuss how embeddings can be quantized in theory and in practice, after which we introduce a demo showing a real-life retrieval scenario of 41 million Wikipedia texts.

Table of Contents

- Why Embeddings?
- Embeddings may struggle to scale
- Improving scalability
- Binary Quantization
- Binary Quantization in Sentence Transformers
- Binary Quantization in Vector Databases
- Scalar (int8) Quantization
- Scalar Quantization in Sentence Transformers
- Scalar Quantization in Vector Databases
- Combining Binary and Scalar Quantization
- Quantization Experiments
- Influence of Rescoring
- Binary Rescoring
- Scalar (Int8) Rescoring
- Retrieval Speed
- Performance Summarization
- Demo
- Try it yourself
- Future work
- Acknowledgments
- Citation
- References

Why Embeddings?

Embeddings are one of the most versatile tools in natural language processing, supporting a wide variety of settings and use cases. In essence, embeddings are numerical representations of more complex objects, like text, images, audio, etc. Specifically, the objects are represented as n-dimensional vectors. After transforming the complex objects, you can determine ...
