Blocking AI crawlers doesn't stop citations - new data shows why

About this article

New BuzzStream data from 4 million AI citations shows blocking AI crawlers rarely stops ChatGPT or Gemini from citing publisher content - here is why.

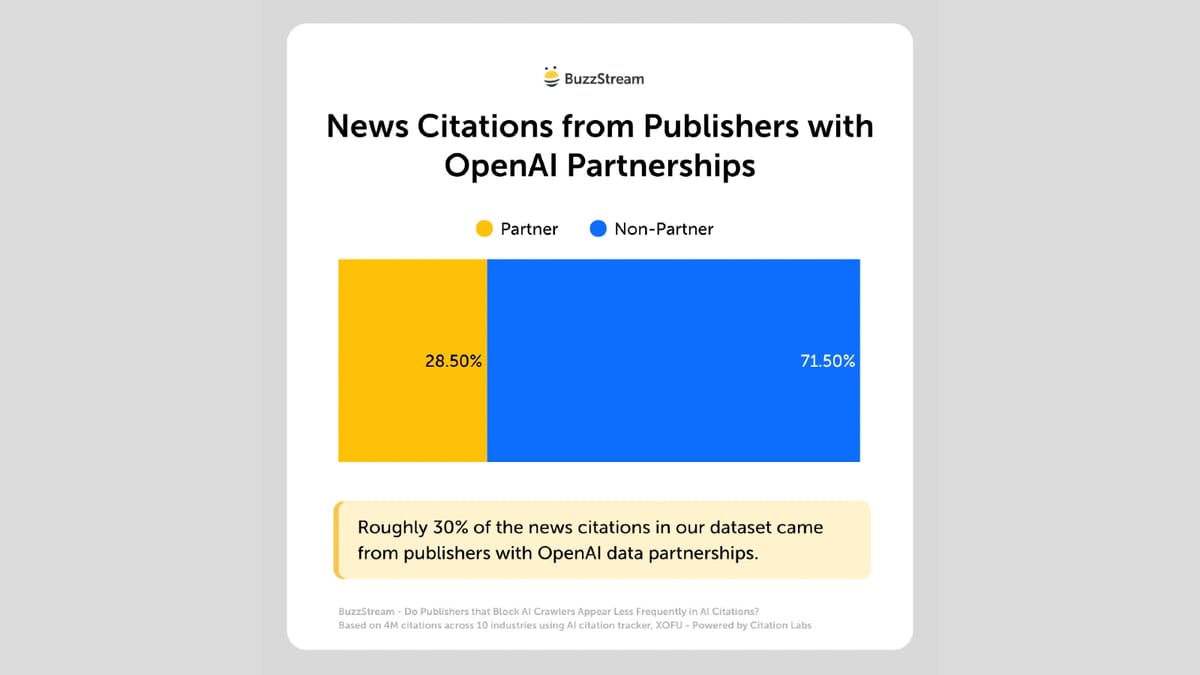

Blocking AI bots with a robots.txt file has become a reflex for many publishers. But a new study published on March 19, 2026, by BuzzStream challenges the assumption that blocking crawlers translates into fewer AI citations. The findings, drawn from 4 million citations across 3,600 prompts, suggest the relationship between crawler access and AI citation is far weaker than most publishers believe.

The research, authored by Vince Nero, Director of Content Marketing at BuzzStream, used data from Citation Labs' AI citation-tracking tool, XOFU. The dataset covered ChatGPT, Gemini, Google AI Overviews, and Google AI Mode, spanning 10 industries. It is the third installment in a multi-part series examining how AI systems select and surface news content.

The core numbers are striking. Among the top 50 news sites blocking ChatGPT's live retrieval bot, ChatGPT-User, 70.6% still appeared in the dataset's AI citations. Sites blocking OAI-SearchBot - the bot associated with ChatGPT's search features - still appeared in 82.4% of cases. For GPTBot, the training crawler, 88.2% of blocking sites were cited anyway.

On the Google side, 92.3% of sites blocking Google-Extended, the training bot used for Gemini, still appeared in citations. None of the studied sites blocked Googlebot itself, since doing so would also remove them from standard Google Search results - a trade-off no publisher has been willing to make.

What the bots actually do

To understand why these numbers matter, it helps to separa...
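For readers who want to see what "blocking" means in practice, here is a minimal sketch of how a robots.txt file can be checked against the bot names mentioned above, using Python's standard `urllib.robotparser`. The robots.txt content, the `blocked_bots` helper, and the `example.com` URL are hypothetical illustrations, not taken from the BuzzStream study or from any real publisher.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration only (not from any site in the study).
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ChatGPT-User
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

# User-agent tokens named in the article, plus Googlebot for comparison.
AI_BOTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "Google-Extended", "Googlebot"]


def blocked_bots(robots_txt, bots=AI_BOTS, url="https://example.com/"):
    """Return the bots that the given robots.txt text disallows for `url`."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return [bot for bot in bots if not parser.can_fetch(bot, url)]


print(blocked_bots(SAMPLE_ROBOTS_TXT))
# → ['GPTBot', 'ChatGPT-User', 'Google-Extended']
```

In this sample, OAI-SearchBot and Googlebot fall through to the wildcard rule and remain allowed. The check only reports what a robots.txt file declares; it says nothing about whether a site still shows up in AI citations, which is the gap the study measures.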
