Can Claude Opus 4.7 and Ensemble AI Models Finally Make Code Review Reliable?

About this article

Ensemble AI models like Claude Opus 4.7 transform code review reliability. Discover how multi-model approaches catch subtle bugs human reviewers miss

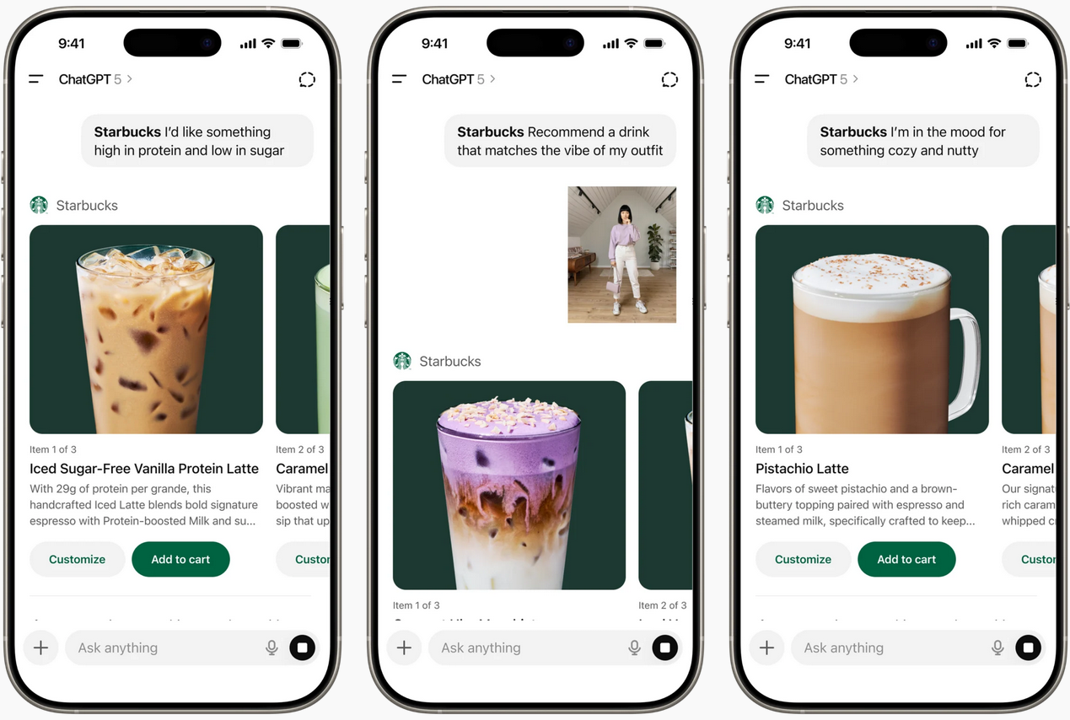

Skip to content Market Coverage News April 18, 2026 FuturumAI Can Claude Opus 4.7 and Ensemble AI Models Finally Make Code Review Reliable? AI Platforms, Enterprise Software & Digital Workflows, Software Lifecycle Engineering Publication Date: April 18, 2026 CodeRabbit has integrated Claude Opus 4.7 into its AI code review engine, using an ensemble of frontier models to target gaps that human reviewers often miss, such as subtle race conditions and deep-file bugs [1]. This approach raises the bar for automated code review, but also forces enterprises to rethink trust, reliability, and the operational risks of AI-driven development. According to Futurum Group's 1H 2026 Software Engineering Decision Maker Survey (n=828), 40.2% of organizations now see investing in GenAI for code generation, testing, and AI agents as their most critical action for accelerating software delivery. What is Covered in this Article Claude Opus 4.7 integration into CodeRabbit's ensemble AI code review system The shift from single-model to multi-model (ensemble) review pipelines Implications for software quality, developer trust, and operational risk Comparative perspectives on agentic AI versus pipeline AI in code review The News CodeRabbit has added Claude Opus 4.7 to its AI code review engine, moving beyond reliance on a single model to an ensemble approach that benchmarks and selects the best model for each aspect of the review process [1]. The system evaluates new frontier models as they are re...