Distributed Training: Train BART/T5 for Summarization using 🤗 Transformers and Amazon SageMaker


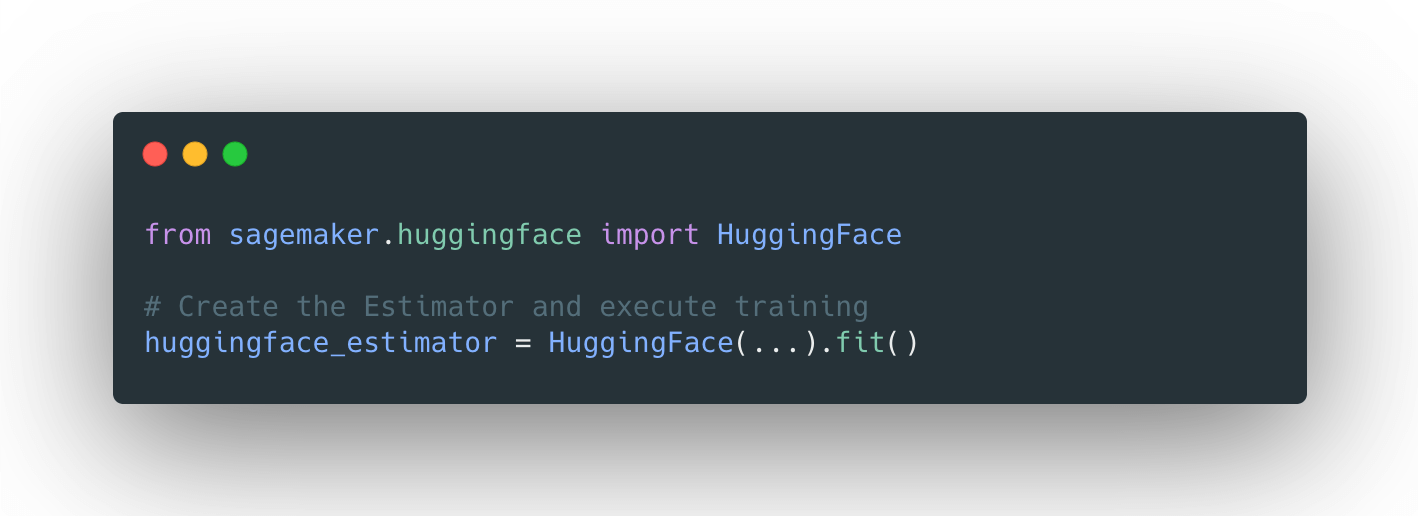

Published April 8, 2021, by Philipp Schmid (philschmid)

In case you missed it: on March 25th we announced a collaboration with Amazon SageMaker to make it easier to create state-of-the-art machine learning models and ship cutting-edge NLP features faster.

Together with the SageMaker team, we built 🤗 Transformers optimized Deep Learning Containers to accelerate training of Transformers-based models. Thanks, AWS friends! 🤗 🚀

With the new HuggingFace estimator in the SageMaker Python SDK, you can start training with a single line of code.

The announcement blog post provides all the information you need to know about the integration, including a "Getting Started" example and links to documentation, examples, and features. These resources are listed again here:

- 🤗 Transformers Documentation: Amazon SageMaker
- Example Notebooks
- Amazon SageMaker documentation for Hugging Face
- Python SDK SageMaker documentation for Hugging Face
- Deep Learning Container

If you're not familiar with Amazon SageMaker: "Amazon SageMaker is a fully managed service that provides every developer and data scientist with the ability to build, train, and deploy machine learning (ML) models quickly. SageMaker removes the heavy lifting from each step of the machine learning process to make it easier to develop high quality models." [REF]

Tutorial

We will use the new Hugging Face DLCs and Amazon S...
