Fair human-centric image dataset for ethical AI benchmarking

Summary

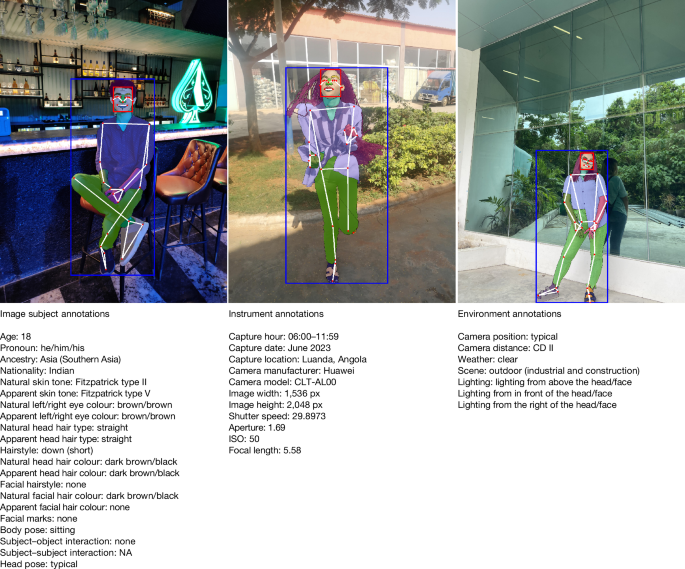

The Fair Human-Centric Image Benchmark (FHIBE) is a new dataset aimed at improving fairness in AI by addressing ethical concerns in data collection, including consent and diversity.

Why It Matters

As AI technologies increasingly rely on image datasets, addressing biases and ethical issues in data collection is crucial for developing trustworthy AI systems. FHIBE sets a new standard for fairness benchmarks, promoting responsible data practices that can enhance the accuracy and inclusivity of AI applications.

Key Takeaways

- FHIBE implements best practices for consent, privacy, and diversity in dataset collection.

- The dataset allows for nuanced bias diagnosis in various human-centric AI tasks.

- FHIBE aims to mitigate potential harms associated with biased AI models.

- It serves as a roadmap for responsible data curation in AI development.

- The introduction of FHIBE highlights the need for ethical considerations in AI datasets.

Subjects

Computer science, Databases, Ethics, Interdisciplinary studies

Abstract

Computer vision is central to many artificial intelligence (AI) applications, from autonomous vehicles to consumer devices. However, the data behind such technical innovations are often collected with insufficient consideration of ethical concerns [1,2,3]. This has led to a reliance on datasets that lack diversity, perpetuate biases and are collected without the consent of data rights holders. These datasets compromise the fairness and accuracy of AI models and disenfranchise stakeholders [4,5,6,7,8]. Although awareness of the problems of bias in computer vision technologies, particularly facial recognition, has become widespread [9], the field lacks publicly available, consensually collected datasets for evaluating bias for most tasks [3,10,11]. In response, we introduce the Fair Human-Centric Image Benchmark (FHIBE, pronounced "Feebee"), a publicly available human image dataset implementing best practices for consent, privacy, compensation, safety, diversity and utility. FHIBE can be used responsibly as a fairness evaluation dataset for many human-centric computer vision tasks, including pose estimation, person segmentation, face detection and verification, and visual question answering. By leveraging comprehensive annotations capturing demographic and physical attributes, environmental factors, instrument and pixel-level annotations, FHIBE can identify a wide variety of biases. The annotation...