Introducing 🤗 Accelerate

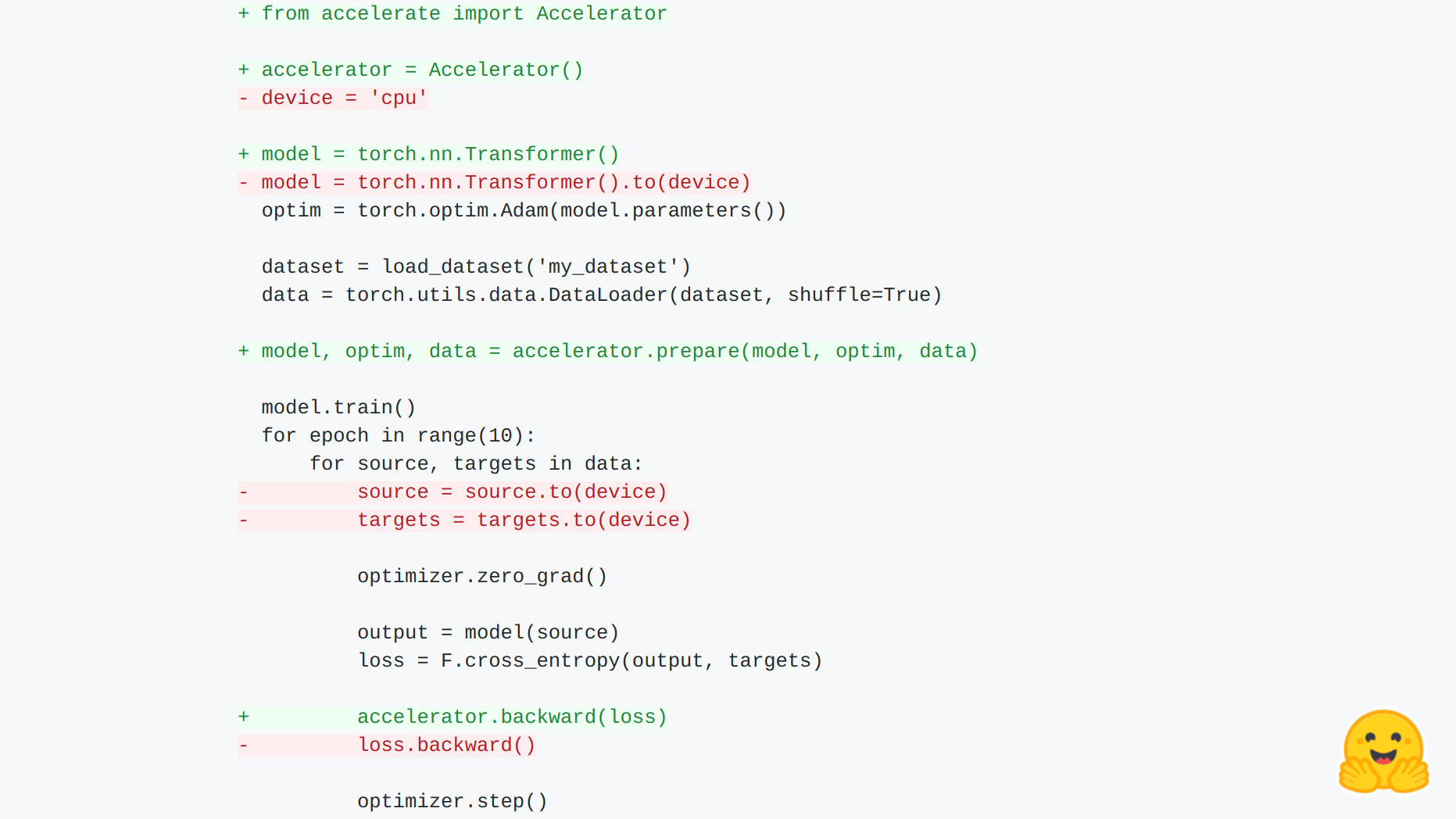

Published April 16, 2021, by Sylvain Gugger (sgugger)

🤗 Accelerate

Run your raw PyTorch training scripts on any kind of device.

Most high-level libraries built on top of PyTorch provide support for distributed training and mixed precision, but the abstractions they introduce require users to learn a new API if they want to customize the underlying training loop. 🤗 Accelerate was created for PyTorch users who like to have full control over their training loops but are reluctant to write (and maintain) the boilerplate code needed to use distributed training (for multi-GPU on one or several nodes, TPUs, ...) or mixed precision training. Future plans include support for FairScale, DeepSpeed, and AWS SageMaker-specific data parallelism and model parallelism.

🤗 Accelerate provides two things: a simple and consistent API that abstracts that boilerplate code, and a launcher command to easily run those scripts on various setups.

Easy integration!

Let's first have a look at an example:

```diff
  import torch
  import torch.nn.functional as F
  from datasets import load_dataset
+ from accelerate import Accelerator

+ accelerator = Accelerator()
- device = 'cpu'
+ device = accelerator.device

  model = torch.nn.Transformer().to(device)
  optim = torch.optim.Adam(model.parameters())

  dataset = load_dataset('my_dataset')
  data = torch.utils.data.DataLoader(dataset, shuffle=True)

+ model, optim, data = accelerator.prepare(model, optim, data)

  model.train()
  for epoch ...
```
