Pete Hegseth and the AI Doomsday Machine

Summary

The article discusses the clash between AI regulation advocates and corporate interests, highlighting Pete Hegseth's role in opposing sensible AI oversight amid rising concerns about AI's potential dangers.

Why It Matters

As AI technology rapidly advances, the lack of regulation poses significant risks, including mass surveillance and autonomous weapons. Understanding the political and corporate dynamics at play is crucial for shaping future AI policies and ensuring safety.

Key Takeaways

- Pete Hegseth is described as one of two forces blocking sensible AI regulation; corporate greed is named as the first.

- Anthropic advocates for strict AI safety measures to prevent misuse.

- The AI industry is heavily influenced by political donations and lobbying.

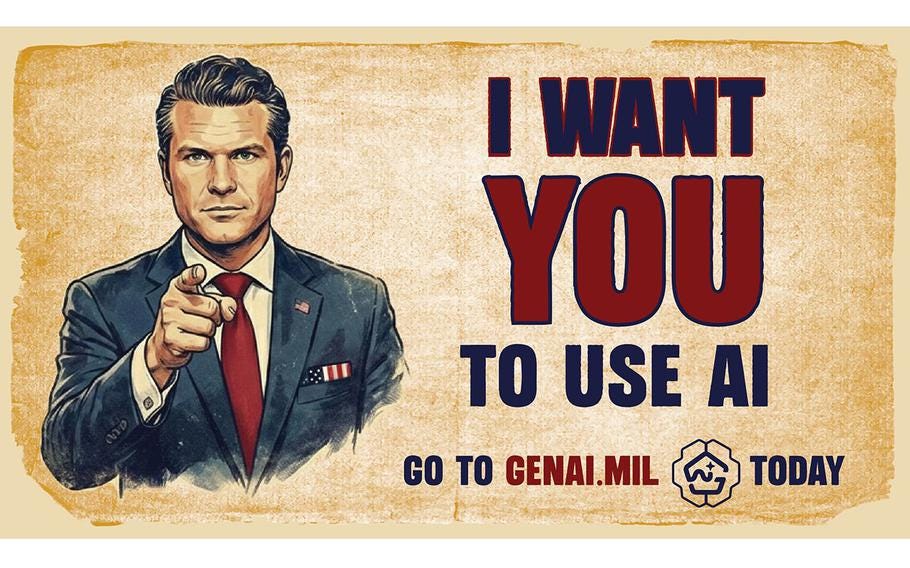

Two forces are stopping sensible regulation of AI. He's one of them.

Robert Reich, Feb 25, 2026

Friends,

Which is more important to you? Allowing Pete Hegseth to use artificial intelligence (AI) however he wants, OR preventing AI from doing mass surveillance of Americans and creating lethal weapons without human oversight?

That’s the stark choice posed by the intensifying fight between an AI corporation called Anthropic and Pete Hegseth, Trump’s Secretary of “War.”

AI is dangerous as hell. I view it as one of the four existential crises America now faces, along with climate change, widening inequality, and the destruction of our democracy.

To be sure, AI is capable of changing human life for the better. But if unregulated, it could be a destructive nightmare: giving government the power to know everything about us and suppress all dissent, distorting news and media to the point where no one can distinguish between lies and truth, and threatening human beings with bots that could decide we’re unnecessary obstacles to their taking over the earth.

Now is the time we should be putting guardrails in place. But two forces are making this difficult if not impossible.

The first is corporate greed, which is why OpenAI, Elon Musk’s xAI, and Google have jettisoned all precautions. Several AI researchers have left AI companies in recent weeks, warning that safety and other considerations are being pushed aside as their corporations ...
