The Interstate of Science: Merging Neuroscience and AI

Summary

The article explores the intersection of neuroscience and artificial intelligence, highlighting how AI technologies are enhancing our understanding of brain functions and accelerating research in neuroscience.

Why It Matters

As AI continues to evolve, its integration with neuroscience offers significant potential for breakthroughs in understanding brain mechanisms. This synergy not only advances scientific inquiry but also informs the development of more sophisticated AI systems, making it a crucial area of study for both fields.

Key Takeaways

- AI is not yet capable of replicating the complexity of the human brain but is instrumental in advancing neuroscience research.

- Neuroscientists are leveraging AI to create better computational models that predict brain responses to stimuli.

- Collaboration between AI research and neuroscience is leading to faster discoveries about brain function and disease.

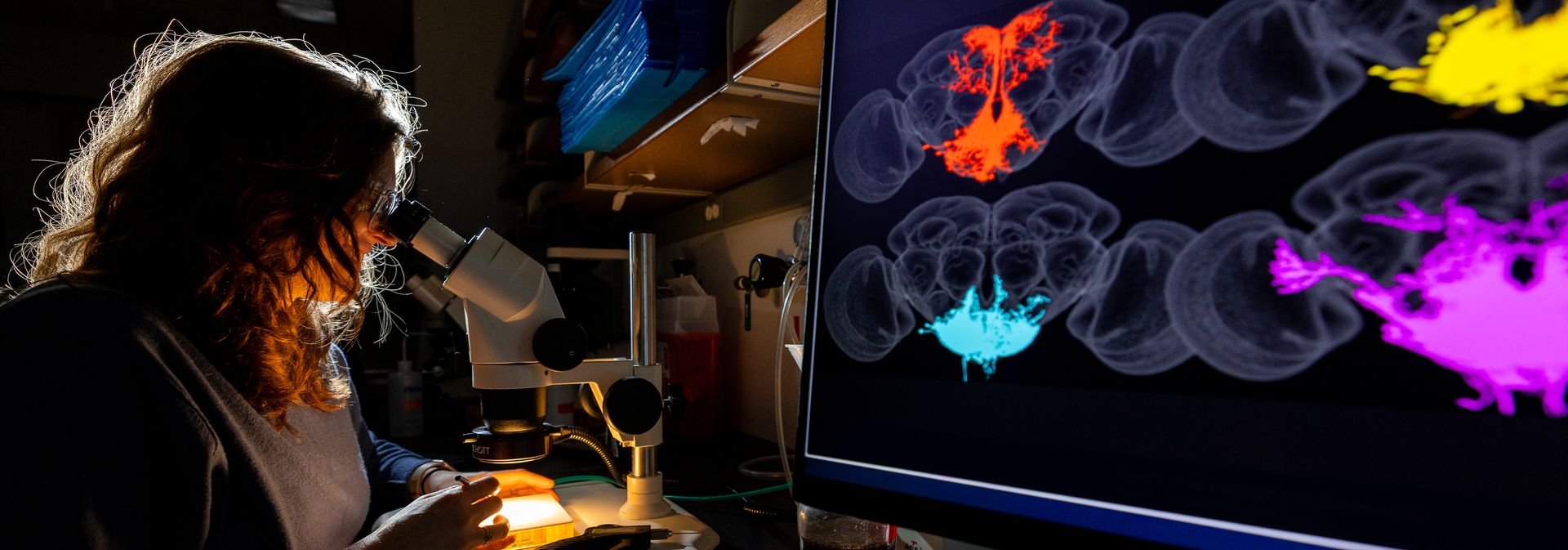

Enter a world of possibilities: generate ideas, get answers, have conversations, create endless images. Artificial intelligence, and large language models like ChatGPT, Gemini, or Claude in particular, can often seem almost human. But this technology is still far from accurately representing the human brain. No matter how sophisticated the tools seem, AI does not think for itself, nor are its connections as deeply complex as the brain's. However, AI is in an important feedback loop with neuroscience, advancing the questions being asked, accelerating technology, and fostering discovery.

Building Brain Tools

Our senses, emotions, and memories make us uniquely human. How we take in information from the outside world through our eyes, ears, nose, mouth, and fingertips, and how we process it, is still very much a mystery that neuroscientists and other researchers are trying to solve. As we learn more, better model systems can be built to answer new questions at an accelerated rate.

One such effort is the work of Samuel Norman-Haignere, PhD, an associate professor of Neuroscience and Biostatistics at the University of Rochester, who studies how individual neurons in the auditory cortex respond to and process sound in order to build better computational models of speech. Norman-Haignere’s research leverages techniques from AI to build computational models that can predict how the human brain codes complex sounds such as speech and music. His lab collects precise, large-scale data...