AI Body Gap: Why Robots Need "Internal Feelings" to be Safe


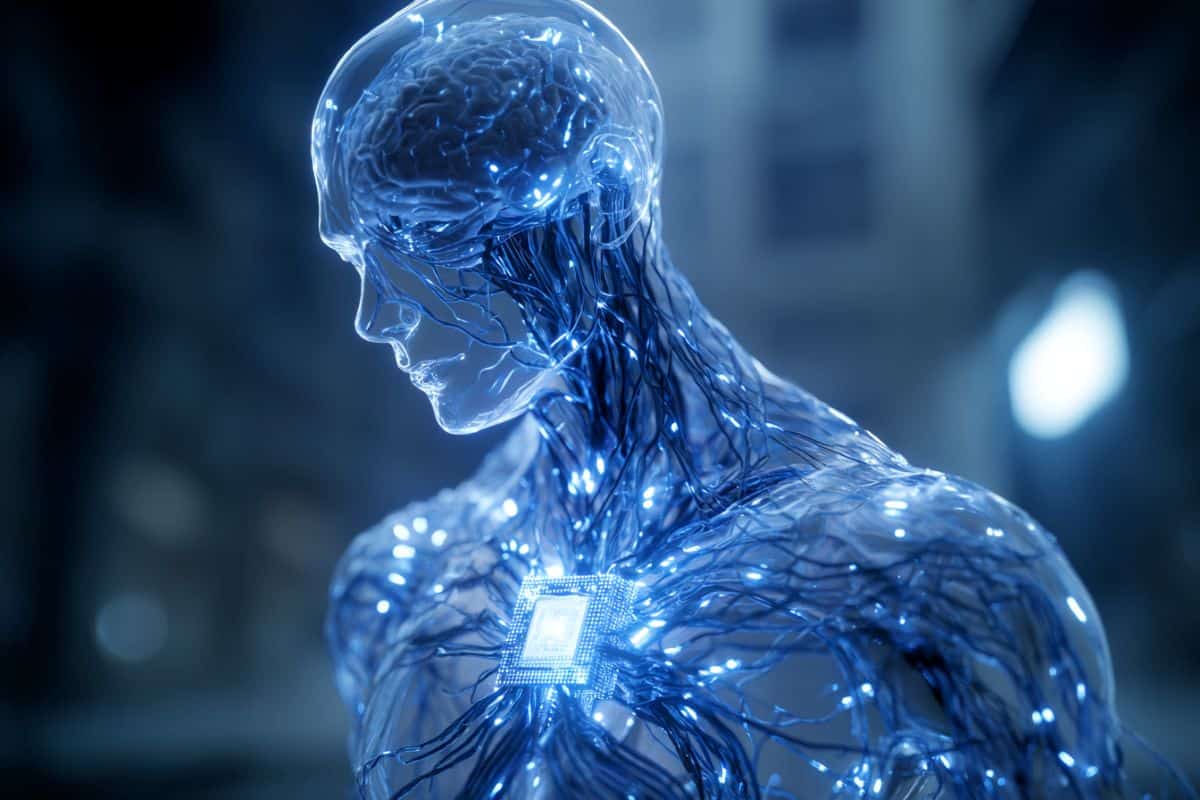

Why is AI overconfident? A new study explores "internal embodiment," the missing link in AI safety. Researchers explain how a lack of internal "body" states prevents AI from understanding human context and avoiding errors.

Summary: When you reach for a saltshaker, your brain isn’t just calculating coordinates. It is also listening to your body’s sense of balance, the friction on your skin, and your internal level of thirst or fatigue. A provocative new study argues that current AI models like ChatGPT and Gemini are fundamentally flawed because they lack “internal embodiment.”

While AI can describe a glass of water perfectly, it has no internal state of “thirst” to regulate its behavior. The researchers argue that without these internal “vulnerabilities” and self-regulating mechanisms, AI will remain prone to overconfident errors and will struggle to truly align with human values.

Key Facts

- The Missing Ingredient: The study distinguishes between External Embodiment (interacting with the physical world) and Internal Embodiment (the constant monitoring of internal states like fatigue, uncertainty, or need).
- The Perceptual Test: Researchers tested leading AI models using “point-light displays” (dots that suggest a human figure). Even human newborns recognize a person in these displays, but the AI models failed, sometimes describing the dots as a “constellation of stars.”
- Safety via Vulnerability: In humans, the body acts as a built-in safety system. If we are “depleted” or “uncertain,” our body registers it. AI lacks this “internal cost,” meaning it has no intrinsic reason to avoid being overconfident when it’s actually guessing.
- The Dual-Embodiment Framework: UCLA researchers propose a new architecture for AI that tracks “synthetic” internal s...