NIST Identifies Types of Cyberattacks That Manipulate Behavior of AI Systems

Summary

NIST outlines various cyberattack types that exploit vulnerabilities in AI systems, emphasizing the need for improved mitigation strategies against adversarial machine learning threats.

Why It Matters

As AI systems become integral to more sectors, understanding how adversarial attacks work is crucial. The NIST publication catalogs the known attack types and the limits of current mitigations, and it encourages the development of more effective defenses, which is vital for ensuring the reliability and safety of AI applications.

Key Takeaways

- NIST identifies several types of adversarial attacks on AI systems.

- Current mitigation strategies lack robust assurances against these threats.

- The publication encourages collaboration among developers to enhance AI defenses.

- AI systems can be compromised through untrustworthy data during training and operation.

- Understanding these vulnerabilities is essential for the safe deployment of AI technologies.
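
The takeaway about untrustworthy data during operation can be made concrete with a toy example. The sketch below is not taken from the NIST publication. It assumes a hand-built linear classifier with made-up weights, and it uses a sign-based perturbation (in the style of the fast gradient sign method) as one simple way an attacker might nudge an input across the decision boundary. All names and numbers are hypothetical.

```python
# Toy evasion attack on a hand-built linear classifier.
# Weights, bias, and inputs are hypothetical values chosen for illustration.

def sign(value):
    """Return -1.0, 0.0, or 1.0 according to the sign of value."""
    if value > 0:
        return 1.0
    if value < 0:
        return -1.0
    return 0.0


def score(weights, bias, features):
    """Linear decision score: dot(weights, features) + bias."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def predict(weights, bias, features):
    """Classify as 1 when the score is positive, otherwise 0."""
    return 1 if score(weights, bias, features) > 0 else 0


def evade(weights, features, current_label, epsilon):
    """Shift every feature by at most epsilon so the score moves
    away from the currently predicted label."""
    push = -1.0 if current_label == 1 else 1.0
    return [x + push * epsilon * sign(w) for w, x in zip(weights, features)]


WEIGHTS = [2.0, -1.0, 0.5]
BIAS = -0.5

clean_input = [0.6, 0.3, 0.4]
clean_label = predict(WEIGHTS, BIAS, clean_input)

# The attacker is assumed to know the weights (a white-box setting).
adversarial_input = evade(WEIGHTS, clean_input, clean_label, epsilon=0.2)
adversarial_label = predict(WEIGHTS, BIAS, adversarial_input)

print("clean label:", clean_label)
print("adversarial label:", adversarial_label)
```

With these values the clean input is classified as 1 and the perturbed input as 0, even though no feature changes by more than 0.2. Real attacks target far larger models and often work without access to the weights, so this only illustrates the basic idea.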

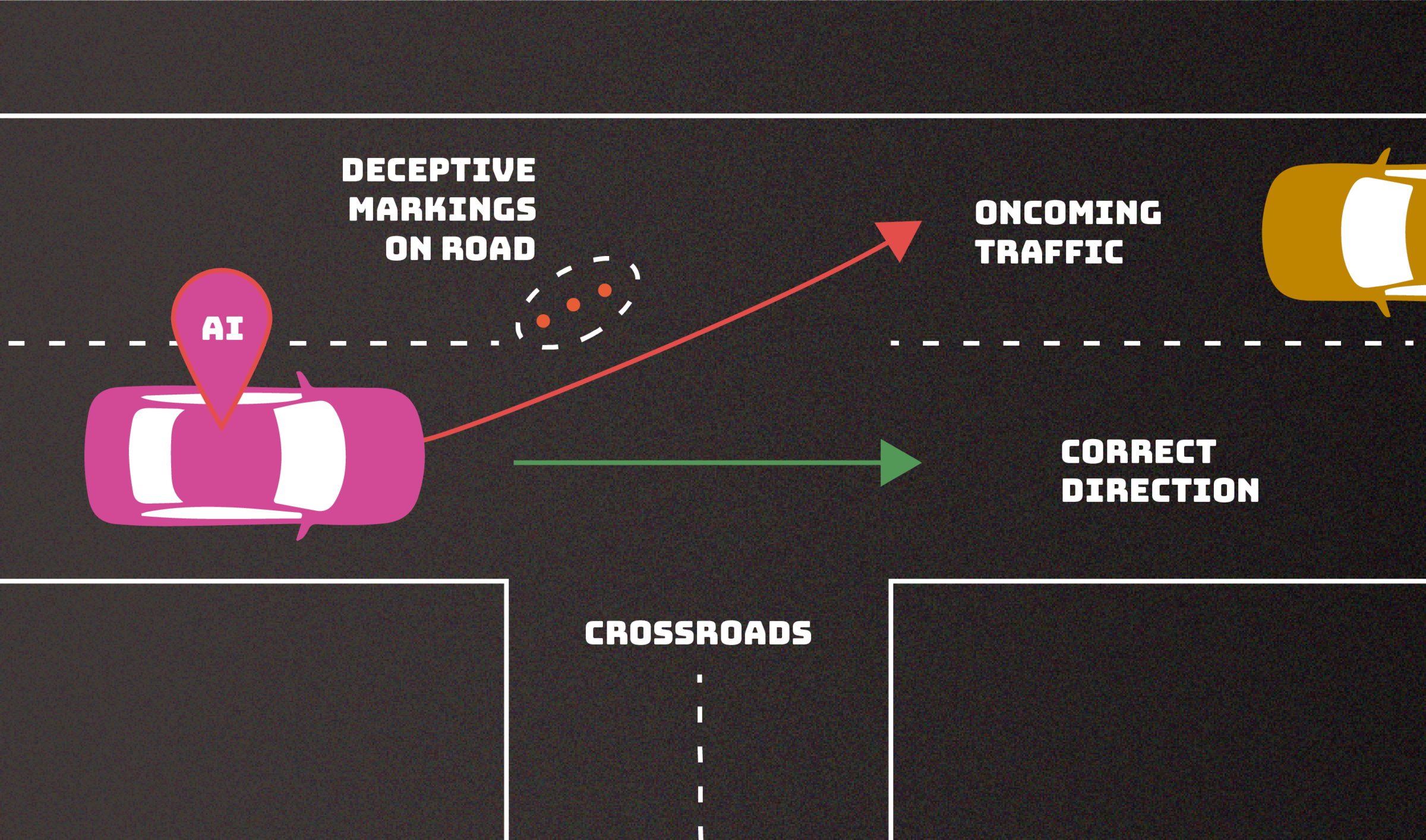

https://www.nist.gov/news-events/news/2024/01/nist-identifies-types-cyberattacks-manipulate-behavior-ai-systems

Publication lays out “adversarial machine learning” threats, describing mitigation strategies and their limitations.

January 4, 2024

- AI systems can malfunction when exposed to untrustworthy data, and attackers are exploiting this issue.

- New guidance documents the types of these attacks, along with mitigation approaches.

- No foolproof method exists as yet for protecting AI from misdirection, and AI developers and users should be wary of any who claim otherwise.

An AI system can malfunction if an adversary finds a way to confuse its decision making. In this example, errant markings on the road mislead a driverless car, potentially making it veer into oncoming traffic. This “evasion” attack is one of numerous adversarial tactics described in a new NIST publication intended to help outline the types of attacks we might expect along with approaches to mitigate them. Credit: N. Hanacek/NIST

Adversaries can deliberately confuse or even “po...
