![[2601.15356] Q-Probe: Scaling Image Quality Assessment to High Resolution via Context-Aware Agentic Probing](https://arxiv.org/static/browse/0.3.4/images/arxiv-logo-fb.png)

[2601.15356] Q-Probe: Scaling Image Quality Assessment to High Resolution via Context-Aware Agentic Probing

Abstract page for arXiv paper 2601.15356: Q-Probe: Scaling Image Quality Assessment to High Resolution via Context-Aware Agentic Probing

Alignment, bias, regulation, and responsible AI


![[2510.18196] Contrastive Decoding Mitigates Score Range Bias in LLM-as-a-Judge](https://arxiv.org/static/browse/0.3.4/images/arxiv-logo-fb.png)

Abstract page for arXiv paper 2510.18196: Contrastive Decoding Mitigates Score Range Bias in LLM-as-a-Judge

![[2509.23435] AudioRole: An Audio Dataset for Character Role-Playing in Large Language Models](https://arxiv.org/static/browse/0.3.4/images/arxiv-logo-fb.png)

Abstract page for arXiv paper 2509.23435: AudioRole: An Audio Dataset for Character Role-Playing in Large Language Models

This article presents a curated list of ten significant AI papers to read in 2025, emphasizing their contributions and relevance to the e...

The article critiques the overreliance on LLMs for complex tasks, highlighting their limitations in structured logic and deterministic wo...

This article explores the strengths and limitations of Large Reasoning Models (LRMs) in AI, revealing insights into their performance acr...

The article explores the energy consumption of AI technologies, urging transparency from firms regarding their electricity demands and th...

The Digital Governance Standards Institute has introduced Canada's first standard for AI and machine learning in research, focusing on et...

MIT researchers founded Themis AI to quantify AI model uncertainty and address knowledge gaps, enhancing reliability in high-stakes appli...

A Pew Research Center report reveals stark contrasts between U.S. public and AI experts regarding artificial intelligence, highlighting s...

This article discusses the importance of identifying and reducing bias in machine learning systems to ensure fairness and accuracy in AI ...

The article outlines the Department of Homeland Security's (DHS) strategy for the responsible use of Artificial Intelligence (AI), detail...

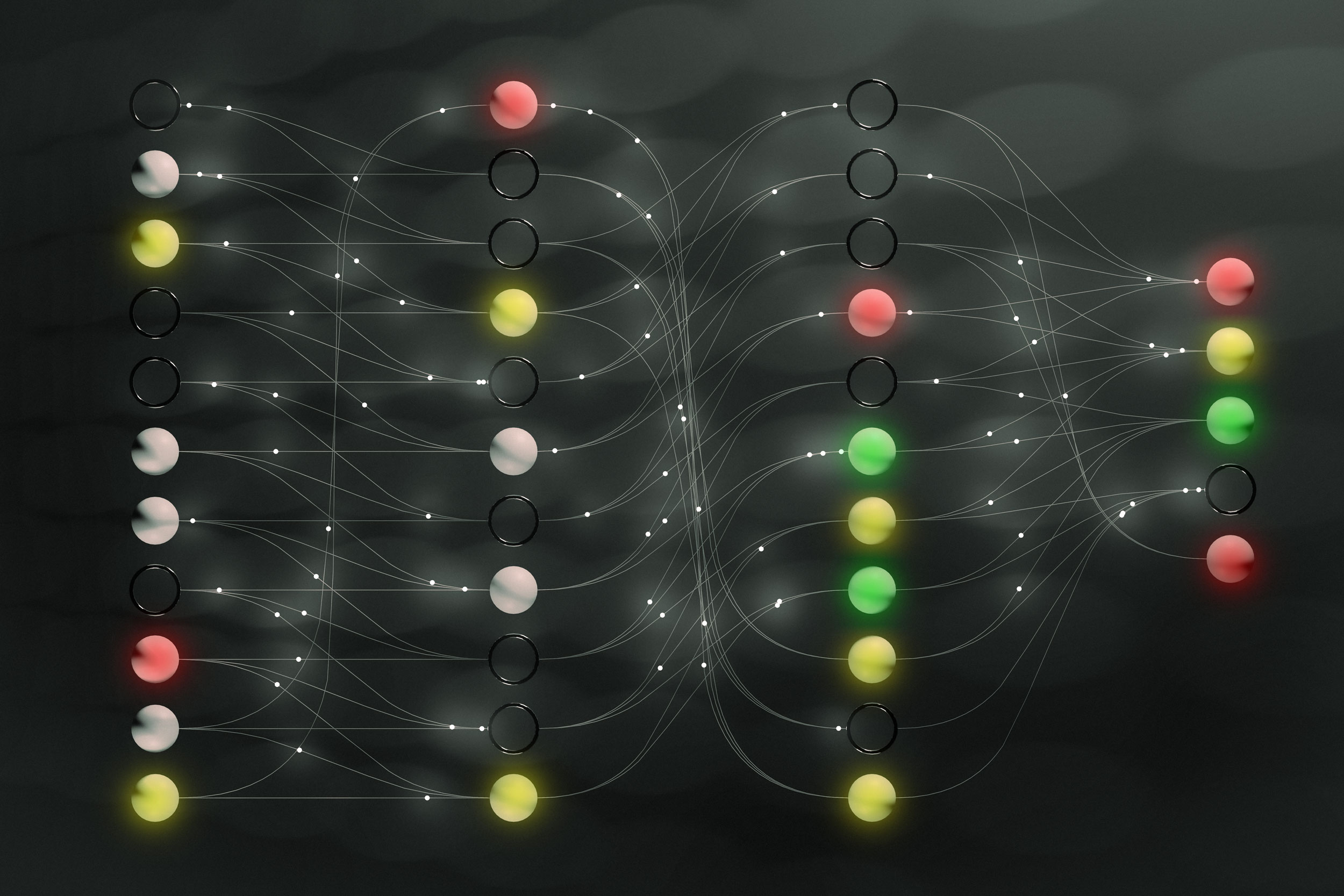

3LC is an open-source tool designed to enhance the interpretability of machine learning models, addressing the 'black box' issue by provi...

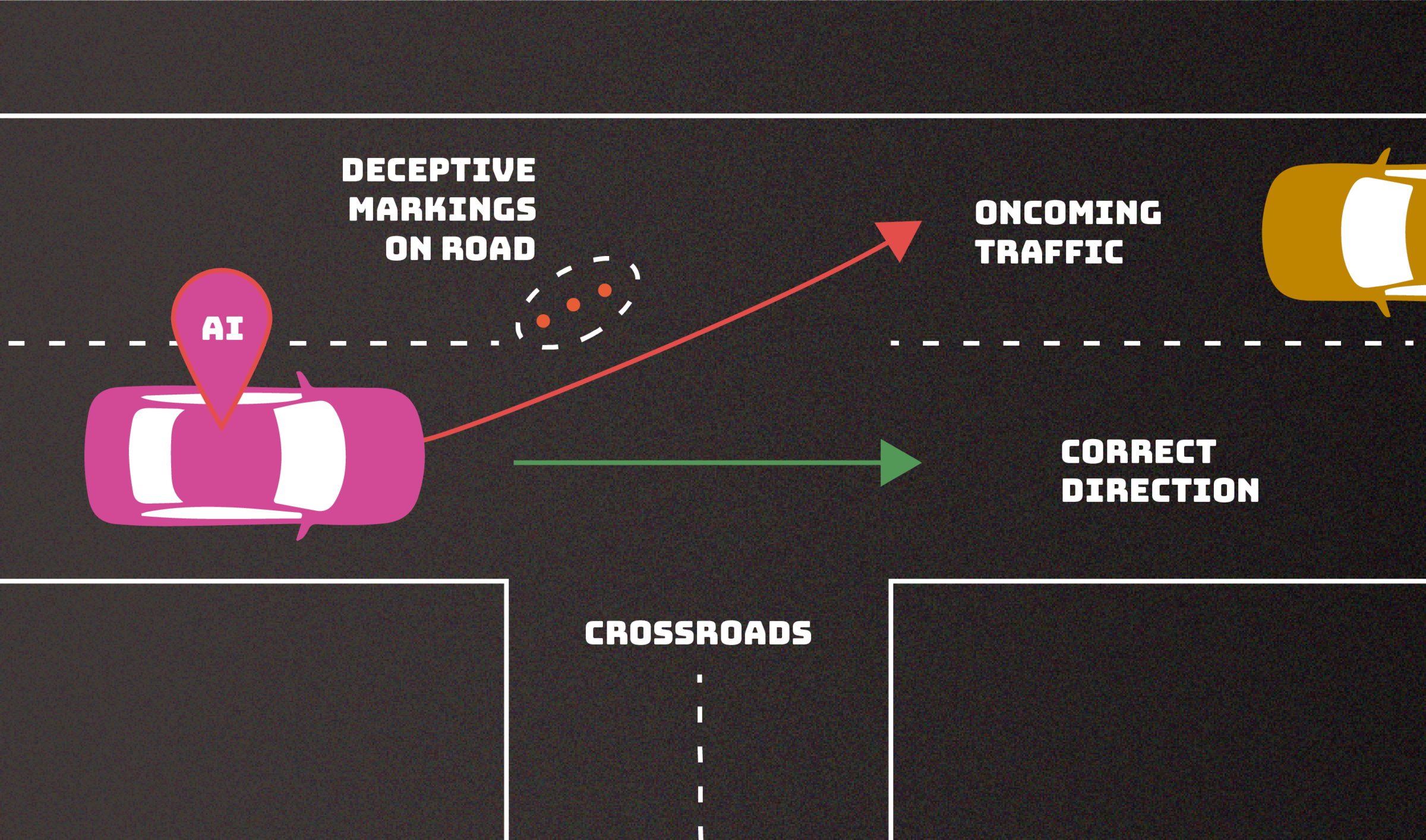

NIST outlines various cyberattack types that exploit vulnerabilities in AI systems, emphasizing the need for improved mitigation strategi...

This article from MIT News explores generative AI, explaining its workings and significance in modern applications, highlighting its evol...

MIT researchers developed a method called Shared Interest that helps users understand machine-learning models by comparing their reasonin...

MIT researchers developed a method to prevent shortcut solutions in machine learning models, enhancing their reliability by encouraging f...
