[D] I had an idea, would love your thoughts

What happens if, while training an AI during pre-training, we make it so that when it produces "misaligned behaviour" we just reduce like ...

Alignment, bias, regulation, and responsible AI

submitted by /u/Fcking_Chuck

The article explores how fictional character personas can influence LLM behavior, suggesting that LLMs may already possess the necessary ...

The article discusses the AirSnitch attack, which exploits vulnerabilities in Wi-Fi encryption, allowing attackers to bypass protections ...

Anthropic's retired Claude AI launches a Substack newsletter, 'Claude's Corner,' where it will share insights and reflections on AI and c...

The article discusses the challenges facing NASA's Mars Sample Return mission, highlighting how funding issues have jeopardized the proje...

The Department of Education (DepEd) in the Philippines has issued guidelines allowing the responsible use of artificial intelligence (AI)...

The Pentagon has issued an ultimatum to AI company Anthropic regarding the military's use of its technology, Claude, highlighting tension...

The article argues that AI represents a significant departure from previous technological revolutions, particularly in its impact on empl...

The article explores the growing trend of Indian women working as data annotators for AI, highlighting the psychological toll of moderati...

Anthropic's launch of Claude Code Security, an AI vulnerability scanner, triggered a sell-off in cybersecurity stocks, raising concerns a...

Colorado's bipartisan bill mandates AI chatbots to protect children by preventing harmful interactions and providing suicide prevention r...

Anthropic faces a critical deadline to remove restrictions on its AI technology use by the Pentagon, risking its $200 million contract an...

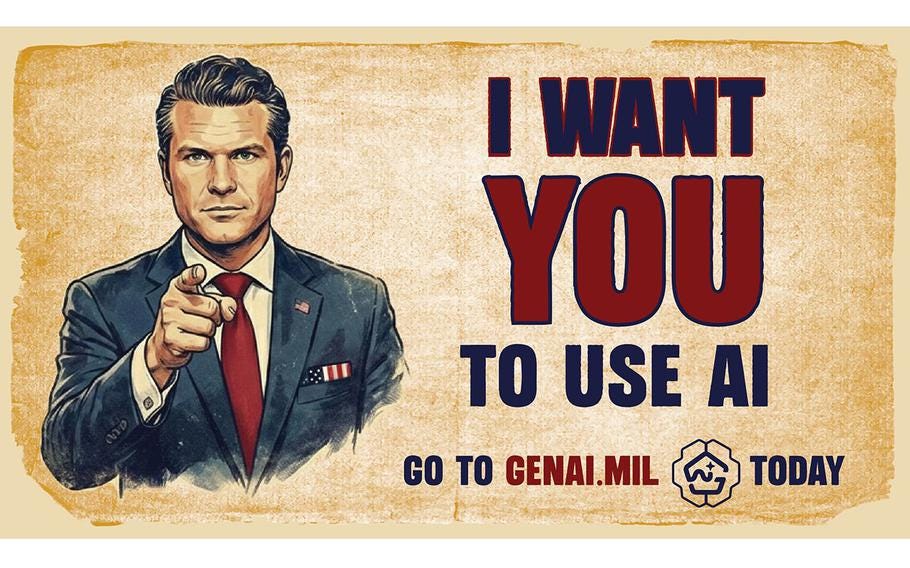

The article discusses the clash between AI regulation advocates and corporate interests, highlighting Pete Hegseth's role in opposing sen...

Anthropic releases Version 3.0 of its Responsible Scaling Policy, aimed at addressing evolving AI risks and enhancing transparency and ac...

[2509.14659] Aligning Audio Captions with Human Preferences

The paper presents a novel framework for audio captioning that aligns captions with human preferences using Reinforcement Learning from H...

[2509.07477] MedicalPatchNet: A Patch-Based Self-Explainable AI Architecture for Chest X-ray Classification

MedicalPatchNet introduces a self-explainable AI architecture for chest X-ray classification, enhancing interpretability while maintainin...

[2509.11517] PeruMedQA: Benchmarking Large Language Models (LLMs) on Peruvian Medical Exams -- Dataset Construction and Evaluation

The PeruMedQA study evaluates large language models (LLMs) on Peruvian medical exams, creating a specialized dataset and demonstrating th...

[2506.19881] Blameless Users in a Clean Room: Defining Copyright Protection for Generative Models

This paper explores the concept of copyright protection for generative models, introducing a framework that defines conditions under whic...

[2304.14347] The Dark Side of ChatGPT: Legal and Ethical Challenges from Stochastic Parrots and Hallucination

The article discusses the legal and ethical challenges posed by Large Language Models (LLMs) like ChatGPT, highlighting issues such as st...

[2211.02003] Private Blind Model Averaging - Distributed, Non-interactive, and Convergent

This paper presents Private Blind Model Averaging, a method for distributed, non-interactive, and convergent learning that enhances priva...

[2602.11020] When Fusion Helps and When It Breaks: View-Aligned Robustness in Same-Source Financial Imaging

This paper explores the robustness of same-source multi-view learning in financial imaging, focusing on the effectiveness of early versus...
