It is impossible to stop AI chatbots from using quotes (any instance of the character ")

No matter how I phrase it in the instructions, how many times I repeat the rule not to use quotes, and which LLM I use, I have failed to ...

GPT, Claude, Gemini, and other LLMs

I am trying to convert XQuery statements into SQL queries within an enterprise context, with the constraint that the solution must rely...

...realities like memory management, highlight a longer road to resilient AI Agents and AGI

Abstract page for arXiv paper 2603.02236: CUDABench: Benchmarking LLMs for Text-to-CUDA Generation

Abstract page for arXiv paper 2603.02540: A Neuropsychologically Grounded Evaluation of LLM Cognitive Abilities

Abstract page for arXiv paper 2603.02528: LLM-MLFFN: Multi-Level Autonomous Driving Behavior Feature Fusion via Large Language Model

Abstract page for arXiv paper 2603.02504: NeuroProlog: Multi-Task Fine-Tuning for Neurosymbolic Mathematical Reasoning via the Cocktail Effect

Abstract page for arXiv paper 2603.02232: Beyond Binary Preferences: A Principled Framework for Reward Modeling with Ordinal Feedback

Abstract page for arXiv paper 2603.02473: Diagnosing Retrieval vs. Utilization Bottlenecks in LLM Agent Memory

Abstract page for arXiv paper 2603.02435: VL-KGE: Vision-Language Models Meet Knowledge Graph Embeddings

Abstract page for arXiv paper 2603.02229: Safety Training Persists Through Helpfulness Optimization in LLM Agents

Abstract page for arXiv paper 2603.02228: Neural Paging: Learning Context Management Policies for Turing-Complete Agents

Abstract page for arXiv paper 2603.02240: SuperLocalMemory: Privacy-Preserving Multi-Agent Memory with Bayesian Trust Defense Against Memory Poisoning

Abstract page for arXiv paper 2603.02239: Engineering Reasoning and Instruction (ERI) Benchmark: A Large Taxonomy-driven Dataset for Foundation Models and Agents

Abstract page for arXiv paper 2603.02222: MedCalc-Bench Doesn't Measure What You Think: A Benchmark Audit and the Case for Open-Book Evaluation

Abstract page for arXiv paper 2603.02221: MedFeat: Model-Aware and Explainability-Driven Feature Engineering with LLMs for Clinical Tabular Prediction

Abstract page for arXiv paper 2603.02219: NExT-Guard: Training-Free Streaming Safeguard without Token-Level Labels

Abstract page for arXiv paper 2603.02218: Self-Play Only Evolves When Self-Synthetic Pipeline Ensures Learnable Information Gain

Abstract page for arXiv paper 2603.02216: ATPO: Adaptive Tree Policy Optimization for Multi-Turn Medical Dialogue

Abstract page for arXiv paper 2603.02215: RxnNano: Training Compact LLMs for Chemical Reaction and Retrosynthesis Prediction via Hierarchical Curriculum Learning

Is Claude underperforming? It’s probably not the model—it’s your prompts. Discover the 7 specific strategies, from 'Few-Shot' prompting t...

Built a dataset scoring every testable claim from Marcus's 474 Substack posts. Two pipelines (Claude Opus 4.6 and ChatGPT Codex) analyzed...

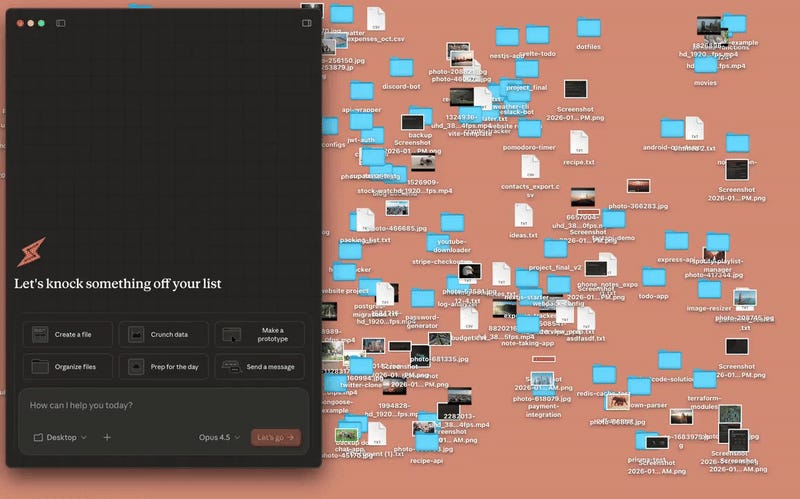

IBM is acquiring Confluent to enhance its AI and cloud services for enterprise clients, while Anthropic has launched Claude Code, a codin...
